And what we can do to fix it.

Surveys are an essential tool that systems can use to gather vital information about the people they serve, so they can better meet those people’s needs and determine whether what they are doing now is effective. Asking about age, ethnicity, culture, sexuality, religion, religiosity, and habits is common practice, and the answers are important for making decisions about programming. Giving surveys that ask for this information to children and teens is entirely acceptable if parental consent is obtained and it is clear how the information is going to be used.

The issue with our District is that its surveys are terribly designed, and the information gathered is often, if not always, used to push political or predetermined policy and curriculum objectives. In other words, the surveys are designed to make it easy for the District to use the data however it wants to advance its message, instead of using the data to learn more about our students and whether the programs and approaches in place are effective.

The word-study (or whatever it’s called) survey was a joke; let’s call it what it was: a set of questions asking whether parents believe that things like phonics and writing are important. What parent would say they are unimportant? And how could information from such a poorly designed instrument be used? Well, suppose the organization collecting it wanted to say something like:

“Parents said that kids learning to use words is important, and this new curriculum helps kids learn words, so that’s why we went with it.”

This avoids the hard work of engaging parents in more difficult conversations about the available options, and it avoids the risk that parents would reach a different conclusion about which option to pursue than the one the District had in mind.

Let’s look at the District’s new “climate survey” (using the term very, very, very loosely), based on screenshots parents have posted.

I’ll focus on three items students are asked to respond to in order to illustrate a couple of points:

(1) “I feel safe at school”

A “no” answer to this question can mean a million things. Some students might interpret safety in terms of crime. Some might answer in relation to their peers’ behavior, others in relation to authority figures. Others might understand that the school is safe but still not feel safe because they struggle with, for example, social anxiety. So “safety” means whatever each respondent understands it to mean, which then turns into whatever the District wants it to mean:

“Kids don’t feel safe, so we will pull in more police.”

“Kids don’t feel safe, so we are going to involve outside programs and apps to track them.”

“Kids don’t feel safe, so we will add more diversity programming.”

“Kids don’t feel safe, so we will increase spending on mental health staff and resources.”

Most people looking at the list above will be happy with some or all of these interventions, but we have no way of knowing whether any of them address the issue a given student is facing.

(2) “Mental Health: please check all that apply

I have received support for a mental health concern

I struggle with my Mental Health but have not sought out support

Overall, I feel good about my mental health.

Prefer not to answer

Other”

Nowhere does this item address the quality of the mental health support available, and the response options assume such support is available in the first place. More valuable first questions would be “Do you feel mental health services are accessible in your school?” and “How would you rate the quality of mental health services in your school?” These would provide far better information.

In both of these items, the problem is not that the questions themselves are bad; it’s that they stop far short, which lets whoever reports on the data assign meaning and justify action that may have nothing to do with what the respondent meant. So either the questions need to change or more questions need to be added. This is basic research practice (it’s called operationalization): when attempting to measure an abstract concept, we need to break it into multiple observable pieces that accurately reflect the concept. From there, further analysis may be needed, because our definition of the concept may turn out to be wrong.

(3) “I feel confident that the school disciplinary process is fair and holds students accountable for their actions.”

This is a basic survey design error: a so-called double-barreled question, which attempts to measure two variables with one item. What if a student feels the school disciplinary process is fair but does not hold students accountable? And how are “fair” and “accountable” defined? We run into the same operationalization problem we saw with the safety question, on top of the double-barreled one.

Another issue (I’m going from memory here): I believe the survey asks students whether they feel safer in school than they did four years ago. Again, “safer” is undefined, and given how much a child changes developmentally in four years, the question is literally meaningless.

Lastly, surveys are generally confidential, not anonymous. Identities are protected by assigning each respondent a numerical identifier. This matters for the integrity of the data set. Anonymous means there is no tracking of who is filling out the survey, so how do we know the respondents are even students? How do we know one student isn’t completing the survey more than once? The District might be using a technological workaround, such as linking each response to a student account to ensure integrity, but then the responses are not anonymous; it would just take some time to figure out who entered what. Either way, this needs to be explained more clearly.

There are other problems with the survey, but I think this is enough to demonstrate the point.

I fully understand parents’ frustrations with these surveys and their legitimate concerns about how the information will be used. The District has been exploitative and misleading with the information it has gathered from pretty much every survey it has conducted, but let’s focus on the real issue (the practices of our District) and move away from the idea that school districts shouldn’t conduct surveys at all.

Now the response to this article from our District might be:

“Real surveys would cost too much.”

But that’s not true.

New Jersey has a lot of great universities with strong sociology, social work, and psychology programs. I’m sure there are plenty of graduate students who would jump at the opportunity to do good work in our schools. Maybe even our students at GL could help us do a better job at this (assuming they weren’t involved with this most recent instrument; if they were, they need better guidance).

Both the Superintendent and one of the Assistant Superintendents have doctoral degrees, so they should be able to do this work themselves (unless their doctorates did not involve research requiring instrument design or statistical analysis). SPSS is not expensive, and we could set up a better survey process cheaply.

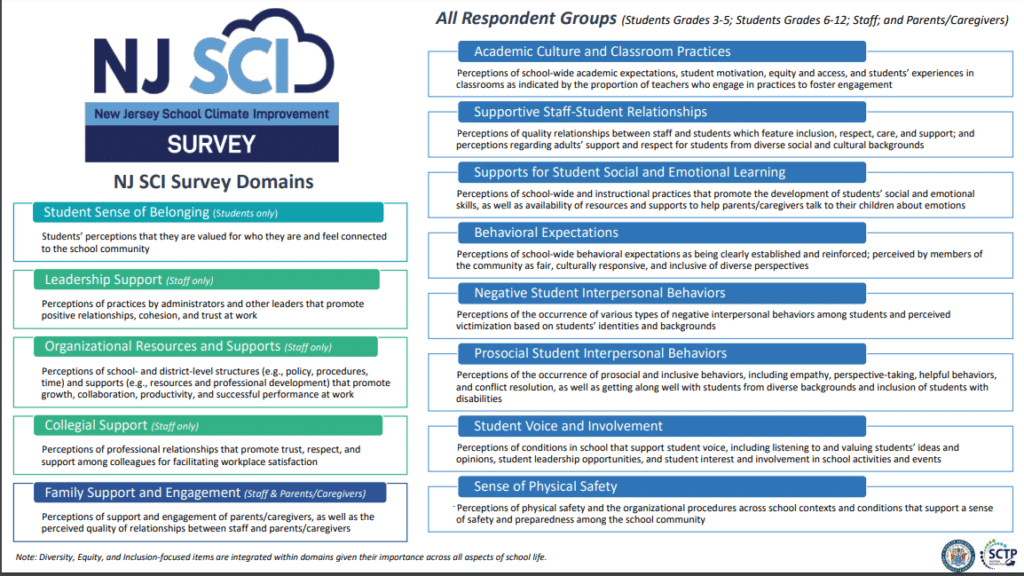

Another option is the NJ School Climate Improvement Survey, which its website advertises as free. I assume the topics many parents are upset about in our current survey appear there too, but those items can be pulled out and made into a separate instrument. Also, if you still think DEI cannot be measured, this survey integrates DEI throughout its domains. I guess whoever developed this instrument is also a segregationist.

I have even offered to help with this initiative, but you can probably guess how that went.

But again, it’s important to reiterate: schools or organizations asking about religion, sexuality, ethnicity, race, and safety are doing a good thing if the information is used to advance students’ best interests. How can an organization best meet the needs of its stakeholders without an evidence-based picture of who they are? Your child reading that gay people exist isn’t going to destroy their life, but if they are struggling with their sexuality (and that can happen in middle school), wouldn’t you want them in an environment that wants to help them feel included? Your child being asked whether they have experienced racism in school isn’t going to turn them into a left-wing communist, and if they haven’t heard the term before, well, it might be a good opportunity to start talking about it, because, you know, it’s a real thing that exists.

However, when the public has little confidence in the institutions asking for this information, whether because of privacy concerns or because it expects the information to be distorted, it becomes a different story.

Both sides of this issue often make it about ideology or their own interests, when it’s really about best practice (I mean real best practice, not what our District means when it uses the term) and meeting the needs of students.

